Static Analysis

Static analysis checks the source code without loading or running the binaries. We should do it manually before the pipeline, and also at several stages of the pipeline.

- Check the code against rules.
- Some rules already exist and are provided by the community.
- We can create our own rules to stop the pipeline when necessary, keeping in mind that other rules will be enforced later, for example by OPA.

For example, we can check whether:

- Resource limits were defined for the pods. It is always a good idea to define them, so that a pod cannot grow unchecked.
- The service account in use is not the default one. Using the default account prevents granular permissions.
- Sensitive data is referenced through Secrets. Sensitive values should never be hardcoded in manifests, whether as environment variables or in ConfigMaps.
- Dockerfiles are also free of sensitive data.
- Etc.

Of course, everything depends on the use case of the project and the company.
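The "own rules" idea can be illustrated with a minimal sketch of three of the checks listed above: limits, service account, and hardcoded sensitive env vars. The function name `check_manifest` and the name patterns `PASSWORD|SECRET|TOKEN` are hypothetical. The check is line-based and does not parse YAML. It assumes the usual `kubectl`-style indentation, so it only shows the shape of a custom rule and is not a replacement for kubesec or OPA.

```shell
# Hypothetical custom rules for a Pod manifest (crude, line-based: no YAML parsing).
# Prints one FAIL line per broken rule and returns non-zero if any rule failed.
check_manifest() {
  file="$1"
  failed=0

  # Rule 1: some resources.limits block must be declared.
  if ! grep -q '^[[:space:]]*limits:' "$file"; then
    echo "FAIL: no resources.limits defined"
    failed=1
  fi

  # Rule 2: serviceAccountName must be set and must not be "default".
  sa=$(sed -n 's/^[[:space:]]*serviceAccountName:[[:space:]]*\([^[:space:]#]*\).*/\1/p' "$file" | head -n 1)
  if [ -z "$sa" ] || [ "$sa" = "default" ]; then
    echo "FAIL: serviceAccountName missing or set to default"
    failed=1
  fi

  # Rule 3: an env var whose name looks sensitive must not carry a literal
  # "value:" on the next line ("valueFrom:" with secretKeyRef is accepted).
  if awk '
    /^[ \t]*-[ \t]*name:[ \t]*[A-Za-z0-9_]*(PASSWORD|SECRET|TOKEN)/ { sensitive = 1; next }
    sensitive && /^[ \t]*value:/ { found = 1 }
    { sensitive = 0 }
    END { if (found) exit 0; exit 1 }
  ' "$file"; then
    echo "FAIL: sensitive env var with a hardcoded value"
    failed=1
  fi

  return "$failed"
}

# Usage in a pipeline step: check_manifest nginx.yaml || exit 1
```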

If we add static analysis to a CI/CD pipeline, where should it go? Note that static analysis happens before the Deploy step. After that point comes another kind of analysis: policy enforcement.

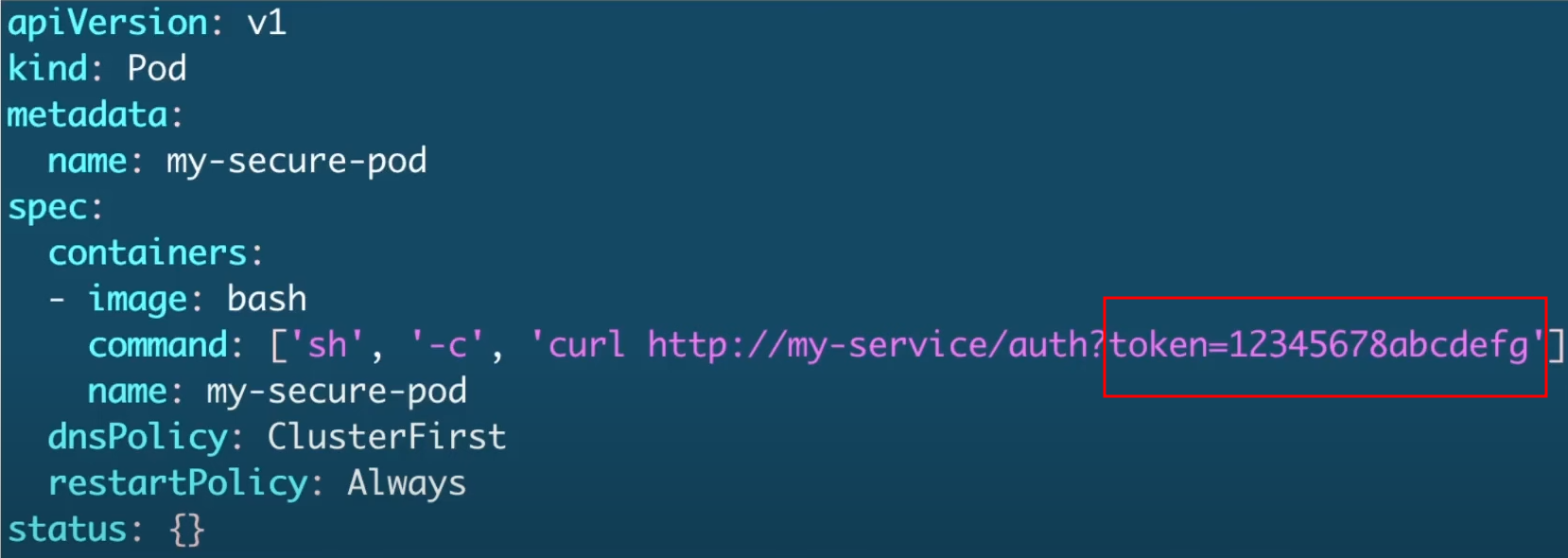

Let's manually analyze the manifest below. This would be the first stage of static analysis.

We can already see that sensitive values are hardcoded directly in the manifest, which should never be done.

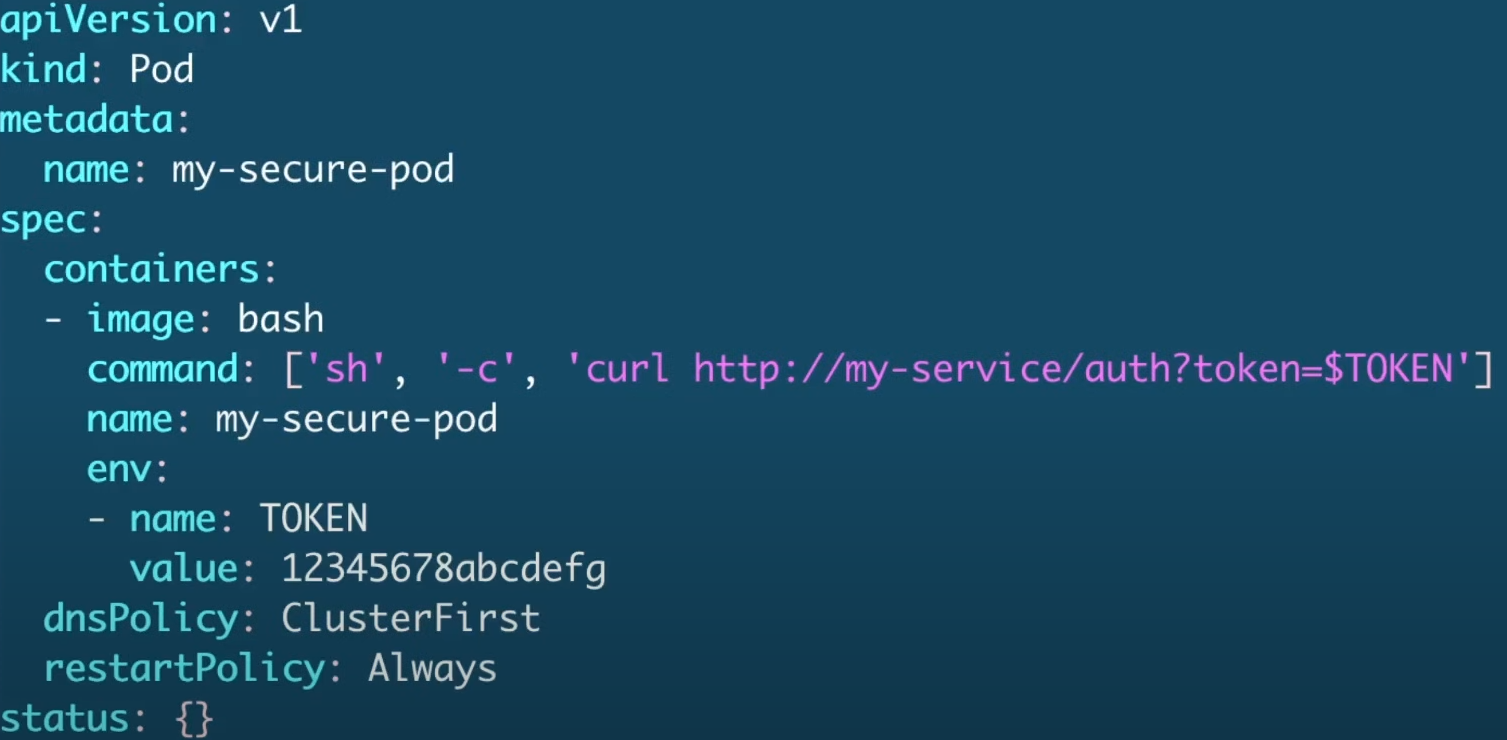

This only changes where the value is referenced, but the value is still clearly exposed.

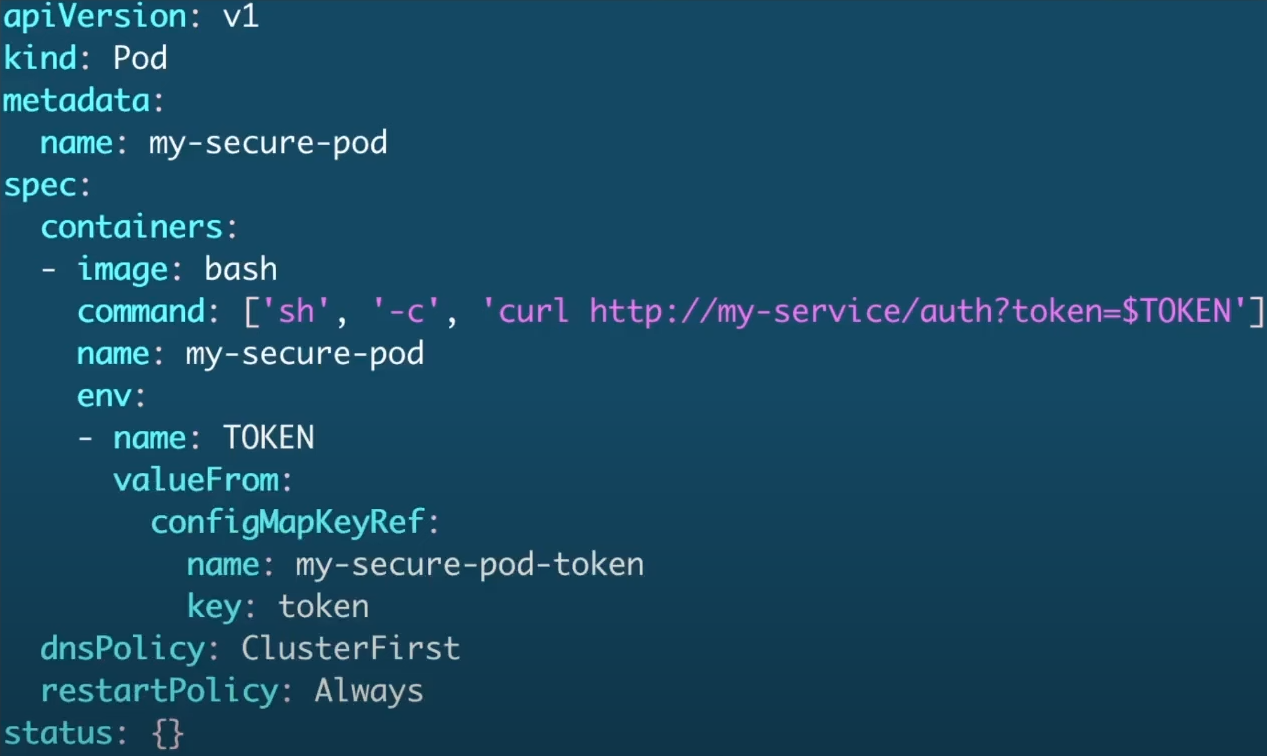

This looks better, but it is still not the best scenario, since we could use Secrets. A ConfigMap may look like a Secret, but it isn't one!

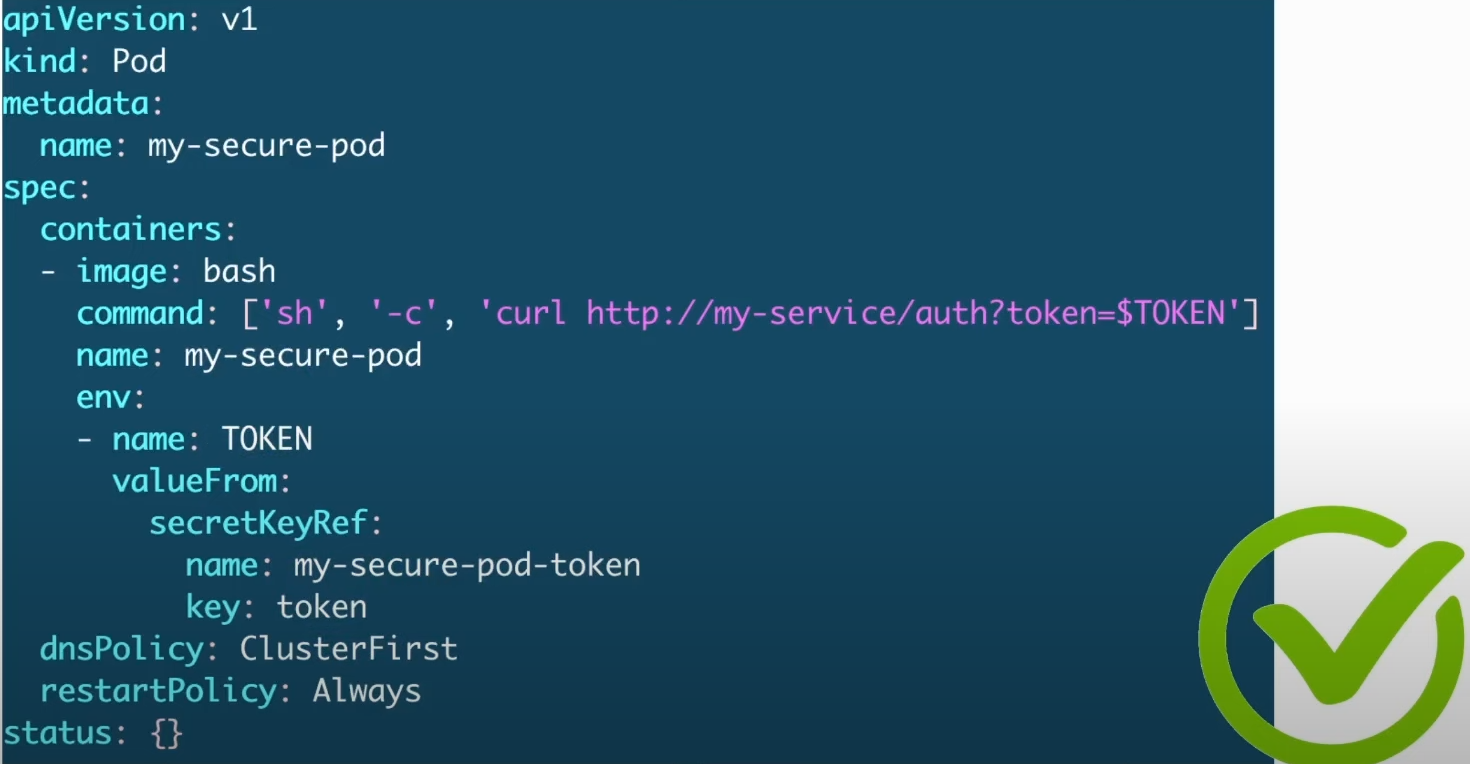

And this would be the best scenario. Secrets are handled more securely than ConfigMaps and can be encrypted at rest. By default their data is only base64-encoded, and encryption at rest must be configured on the API server. There are also options for managing secrets externally, but within the scope of the CKS, this is it.
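Secrets alone are not the whole story, because a Secret's `data` field is only base64-encoded unless encryption at rest is configured. Base64 is an encoding, not a cipher, so anyone who can read the object can recover the value. The minimal sketch below uses a made-up value (`admin123`) and a hypothetical Secret name (`db-credentials`).

```shell
# Sketch: the values stored under a Secret's `data` field are only base64-encoded.
# 'admin123' is a made-up example value.
secret_value='admin123'

encoded=$(printf '%s' "$secret_value" | base64)
echo "encoded: $encoded"    # encoded: YWRtaW4xMjM=

decoded=$(printf '%s' "$encoded" | base64 -d)
echo "decoded: $decoded"    # decoded: admin123

# kubectl applies the same encoding when generating a Secret manifest locally
# (--dry-run=client does not touch the cluster); data.password would be YWRtaW4xMjM=:
# kubectl create secret generic db-credentials --from-literal=password=admin123 --dry-run=client -o yaml
```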

We can add IDE plugins that help during development, but they won't be available in the CKS exam.

Kubesec

Kubesec is an open-source tool for risk analysis of Kubernetes resources.

It statically analyzes the manifest and compares it against security best practices to suggest improvements. The tool does not fix anything. It only checks the manifest and generates a report.

We can use kubesec through:

- The binary (locally or in pipelines).
- A Docker container (locally or in pipelines).
- kubectl plugins.
  - Once the binary is installed, we can use it from within kubectl.
- An admission controller (kubesec-webhook).

Given these options, we can use it at several stages: while developing, and during CI and CD.
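In the CI stages, kubesec's JSON report can drive a pass/fail decision. Below is a minimal sketch of a hypothetical `kubesec_gate` function. The minimum score is an arbitrary threshold chosen by the team. The function reads the first `"score"` line with `sed` so that it does not need `jq`. It therefore assumes kubesec's pretty-printed output for a single manifest; `jq '.[0].score'` would be the sturdier choice.

```shell
# Hypothetical CI gate: reads a kubesec JSON report on stdin and fails
# (non-zero exit) when the score is below the given minimum.
# Assumes kubesec's pretty-printed output for one object (no jq needed).
kubesec_gate() {
  min_score="$1"
  # Take the first line of the form   "score": <integer>,   (negatives allowed).
  score=$(sed -n 's/^[[:space:]]*"score":[[:space:]]*\(-\{0,1\}[0-9][0-9]*\).*/\1/p' | head -n 1)

  if [ -z "$score" ]; then
    echo "kubesec_gate: no score found in input" >&2
    return 2
  fi

  echo "kubesec score: $score (minimum: $min_score)"
  [ "$score" -ge "$min_score" ]
}

# Usage in a pipeline step (10 is an arbitrary example threshold):
#   kubesec scan nginx.yaml | kubesec_gate 10
#   docker run -i kubesec/kubesec:512c5e0 scan /dev/stdin < nginx.yaml | kubesec_gate 10
```

Because the gate is the last command in the pipe, its exit status is what the CI job sees. If kubesec itself fails and prints no report, the gate returns 2.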

Let's try an analysis without installing the binary, using only Docker.

```
root@cks-master:~# k run nginx --image=nginx -oyaml --dry-run=client > nginx.yaml
root@cks-master:~# cat nginx.yaml
apiVersion: v1
kind: Pod
metadata:
  creationTimestamp: null
  labels:
    run: nginx
  name: nginx
spec:
  containers:
  - image: nginx
    name: nginx
    resources: {}
  dnsPolicy: ClusterFirst
  restartPolicy: Always
status: {}

# Note that we analyze the file without creating the pod; nothing is running on the cluster.
# Running kubesec with Docker, passing nginx.yaml as standard input.
root@cks-master:~# docker run -i kubesec/kubesec:512c5e0 scan /dev/stdin < nginx.yaml
Unable to find image 'kubesec/kubesec:512c5e0' locally
512c5e0: Pulling from kubesec/kubesec
c87736221ed0: Pull complete
5dfbfe40753f: Pull complete
0ab7f5410346: Pull complete
b91424b4f19c: Pull complete
0cff159cca1a: Pull complete
32836ab12770: Pull complete
Digest: sha256:8b1e0856fc64cabb1cf91fea6609748d3b3ef204a42e98d0e20ebadb9131bcb7
Status: Downloaded newer image for kubesec/kubesec:512c5e0
[
  {
    "object": "Pod/nginx.default",
    "valid": true,
    "message": "Passed with a score of 0 points",
    "score": 0,
    "scoring": {
      # Some advice...
      "advise": [
        {
          "selector": "containers[] .securityContext .readOnlyRootFilesystem == true",
          "reason": "An immutable root filesystem can prevent malicious binaries being added to PATH and increase attack cost"
        },
        {
          "selector": "containers[] .resources .limits .cpu",
          "reason": "Enforcing CPU limits prevents DOS via resource exhaustion"
        },
        {
          "selector": "containers[] .resources .requests .memory",
          "reason": "Enforcing memory requests aids a fair balancing of resources across the cluster"
        },
        {
          "selector": "containers[] .securityContext .runAsNonRoot == true",
          "reason": "Force the running image to run as a non-root user to ensure least privilege"
        },
        {
          "selector": "containers[] .resources .limits .memory",
          "reason": "Enforcing memory limits prevents DOS via resource exhaustion"
        },
        {
          "selector": ".spec .serviceAccountName",
          "reason": "Service accounts restrict Kubernetes API access and should be configured with least privilege"
        },
        {
          "selector": "containers[] .securityContext .runAsUser -gt 10000",
          "reason": "Run as a high-UID user to avoid conflicts with the host's user table"
        },
        {
          "selector": ".metadata .annotations .\"container.seccomp.security.alpha.kubernetes.io/pod\"",
          "reason": "Seccomp profiles set minimum privilege and secure against unknown threats"
        },
        {
          "selector": ".metadata .annotations .\"container.apparmor.security.beta.kubernetes.io/nginx\"",
          "reason": "Well defined AppArmor policies may provide greater protection from unknown threats. WARNING: NOT PRODUCTION READY"
        },
        {
          "selector": "containers[] .securityContext .capabilities .drop",
          "reason": "Reducing kernel capabilities available to a container limits its attack surface"
        },
        {
          "selector": "containers[] .resources .requests .cpu",
          "reason": "Enforcing CPU requests aids a fair balancing of resources across the cluster"
        },
        {
          "selector": "containers[] .securityContext .capabilities .drop | index(\"ALL\")",
          "reason": "Drop all capabilities and add only those required to reduce syscall attack surface"
        }
      ]
    }
  }
]
```

Let's implement some improvements by adding resources, a service account and a security context.

```
root@cks-master:~# vim nginx.yaml
root@cks-master:~# cat nginx.yaml
apiVersion: v1
kind: Pod
metadata:
  creationTimestamp: null
  labels:
    run: nginx
  name: nginx
spec:
  containers:
  - image: nginx
    name: nginx
    resources:
      requests:
        memory: "128Mi"
        cpu: "250m"
      limits:
        memory: "256Mi"
        cpu: "500m"
    securityContext:
      runAsNonRoot: true
  dnsPolicy: ClusterFirst
  restartPolicy: Always
status: {}
root@cks-master:~# docker run -i kubesec/kubesec:512c5e0 scan /dev/stdin < nginx.yaml
[
  {
    "object": "Pod/nginx.default",
    "valid": true,
    "message": "Passed with a score of 6 points",
    "score": 6, # The score improved
    "scoring": {
      "advise": [
        {
          "selector": "containers[] .securityContext .readOnlyRootFilesystem == true",
          "reason": "An immutable root filesystem can prevent malicious binaries being added to PATH and increase attack cost"
        },
        {
          "selector": "containers[] .securityContext .capabilities .drop",
          "reason": "Reducing kernel capabilities available to a container limits its attack surface"
        },
        {
          "selector": "containers[] .securityContext .capabilities .drop | index(\"ALL\")",
          "reason": "Drop all capabilities and add only those required to reduce syscall attack surface"
        },
        {
          "selector": "containers[] .securityContext .runAsUser -gt 10000",
          "reason": "Run as a high-UID user to avoid conflicts with the host's user table"
        },
        {
          "selector": ".metadata .annotations .\"container.seccomp.security.alpha.kubernetes.io/pod\"",
          "reason": "Seccomp profiles set minimum privilege and secure against unknown threats"
        },
        {
          "selector": ".metadata .annotations .\"container.apparmor.security.beta.kubernetes.io/nginx\"",
          "reason": "Well defined AppArmor policies may provide greater protection from unknown threats. WARNING: NOT PRODUCTION READY"
        }
      ]
    }
  }
]
```

Let's improve it further...

```
root@cks-master:~# cat nginx.yaml
apiVersion: v1
kind: Pod
metadata:
  creationTimestamp: null
  labels:
    run: nginx
  name: nginx
spec:
  serviceAccountName: nginx
  containers:
  - image: nginx
    name: nginx
    resources:
      requests:
        memory: "128Mi"
        cpu: "250m"
      limits:
        memory: "256Mi"
        cpu: "500m"
    securityContext:
      runAsNonRoot: true
      runAsUser: 10001
      readOnlyRootFilesystem: true
      capabilities:
        drop:
        - ALL
  dnsPolicy: ClusterFirst
  restartPolicy: Always
status: {}
```

Checking...

```
root@cks-master:~# docker run -i kubesec/kubesec:512c5e0 scan /dev/stdin < nginx.yaml
[
  {
    "object": "Pod/nginx.default",
    "valid": true,
    "message": "Passed with a score of 12 points",
    "score": 12,
    "scoring": {
      "advise": [
        {
          "selector": ".metadata .annotations .\"container.seccomp.security.alpha.kubernetes.io/pod\"",
          "reason": "Seccomp profiles set minimum privilege and secure against unknown threats"
        },
        {
          "selector": ".metadata .annotations .\"container.apparmor.security.beta.kubernetes.io/nginx\"",
          "reason": "Well defined AppArmor policies may provide greater protection from unknown threats. WARNING: NOT PRODUCTION READY"
        }
      ]
    }
  }
]
```

Only AppArmor and seccomp are left; we will cover them later in the course. Let's apply the manifest to see whether the pod actually runs.

```
# Creating the service account we are going to use
root@cks-master:~# k create sa nginx
serviceaccount/nginx created
root@cks-master:~# k apply -f nginx.yaml
pod/nginx created
root@cks-master:~# k get pods
NAME    READY   STATUS             RESTARTS     AGE
nginx   0/1     CrashLoopBackOff   1 (6s ago)   9s

# Let's look for the problem
root@cks-master:~# k describe pod nginx
...
Conditions:
  Type                        Status
  PodReadyToStartContainers   True
  Initialized                 True
  Ready                       False
  ContainersReady             False
  PodScheduled                True
Volumes:
  kube-api-access-dfr9z:
    Type:                    Projected (a volume that contains injected data from multiple sources)
    TokenExpirationSeconds:  3607
    ConfigMapName:           kube-root-ca.crt
    ConfigMapOptional:       <nil>
    DownwardAPI:             true
QoS Class:                   Burstable
Node-Selectors:              <none>
Tolerations:                 node.kubernetes.io/not-ready:NoExecute op=Exists for 300s
                             node.kubernetes.io/unreachable:NoExecute op=Exists for 300s
Events:
  Type     Reason     Age                From               Message
  ----     ------     ----               ----               -------
  Normal   Scheduled  18s                default-scheduler  Successfully assigned default/nginx to cks-worker
  Normal   Pulled     17s                kubelet            Successfully pulled image "nginx" in 387ms (387ms including waiting). Image size: 71026652 bytes.
  Normal   Pulling    16s (x2 over 17s)  kubelet            Pulling image "nginx"
  Normal   Created    15s (x2 over 17s)  kubelet            Created container nginx
  Normal   Started    15s (x2 over 17s)  kubelet            Started container nginx
  Normal   Pulled     15s                kubelet            Successfully pulled image "nginx" in 357ms (357ms including waiting). Image size: 71026652 bytes.
  Warning  BackOff    14s (x2 over 15s)  kubelet            Back-off restarting failed container nginx in pod nginx_default(f9d3097e-95d4-4da2-a184-bb2e0d4907f4)

root@cks-master:~# k logs pods/nginx --tail 10
/docker-entrypoint.sh: Launching /docker-entrypoint.d/10-listen-on-ipv6-by-default.sh
10-listen-on-ipv6-by-default.sh: info: can not modify /etc/nginx/conf.d/default.conf (read-only file system?)
/docker-entrypoint.sh: Sourcing /docker-entrypoint.d/15-local-resolvers.envsh
/docker-entrypoint.sh: Launching /docker-entrypoint.d/20-envsubst-on-templates.sh
/docker-entrypoint.sh: Launching /docker-entrypoint.d/30-tune-worker-processes.sh
/docker-entrypoint.sh: Configuration complete; ready for start up
2024/09/02 19:35:05 [warn] 1#1: the "user" directive makes sense only if the master process runs with super-user privileges, ignored in /etc/nginx/nginx.conf:2
nginx: [warn] the "user" directive makes sense only if the master process runs with super-user privileges, ignored in /etc/nginx/nginx.conf:2
2024/09/02 19:35:05 [emerg] 1#1: mkdir() "/var/cache/nginx/client_temp" failed (30: Read-only file system)
nginx: [emerg] mkdir() "/var/cache/nginx/client_temp" failed (30: Read-only file system)
```

By following the rules, we lost some permissions that running as root used to sidestep, and we also made the root filesystem read-only.

We need nginx to work under these constraints. We could use an image prepared to run as a non-root user, as we saw earlier, but let's try to solve it here.

For every directory nginx needs to write to, we can mount an emptyDir.

For the nginx configuration, we can create a ConfigMap and mount it under /etc/nginx/conf.d/, where nginx looks for its configuration. We also create the other directories nginx needs to write to.

```
root@cks-master:~# vim configmap.yaml
root@cks-master:~# cat configmap.yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: nginx-config-map
data:
  default.conf: |
    server {
      listen 8080;
      server_name localhost;
      location / {
        root /usr/share/nginx/html;
        index index.html;
      }
    }
root@cks-master:~# k apply -f configmap.yaml
configmap/nginx-config-map created
root@cks-master:~# vim nginx.yaml
root@cks-master:~# cat nginx.yaml
apiVersion: v1
kind: Pod
metadata:
  creationTimestamp: null
  labels:
    run: nginx
  name: nginx
spec:
  serviceAccountName: nginx
  containers:
  - image: nginx
    name: nginx
    resources:
      requests:
        memory: "128Mi"
        cpu: "250m"
      limits:
        memory: "256Mi"
        cpu: "500m"
    securityContext:
      runAsNonRoot: true
      runAsUser: 10001
      readOnlyRootFilesystem: true
      capabilities:
        drop:
        - ALL
    volumeMounts:
    - name: nginx-cache
      mountPath: /var/cache/nginx
    - name: nginx-config
      mountPath: /etc/nginx/conf.d/default.conf
      subPath: default.conf
    - name: nginx-run
      mountPath: /var/run/
  volumes:
  - name: nginx-cache
    emptyDir: {}
  - name: nginx-config
    configMap:
      name: nginx-config-map
  - name: nginx-run
    emptyDir: {}
  dnsPolicy: ClusterFirst
  restartPolicy: Always
status: {}
root@cks-master:~# k apply -f nginx.yaml
pod/nginx created
root@cks-master:~# k get pods
NAME    READY   STATUS    RESTARTS   AGE
nginx   1/1     Running   0          3s
```

Back to AppArmor and seccomp: let's solve those too, just so everything passes!

```
root@cks-master:~# vim nginx.yaml
root@cks-master:~# cat nginx.yaml
apiVersion: v1
kind: Pod
metadata:
  creationTimestamp: null
  labels:
    run: nginx
  name: nginx
  # Adding the annotations kubesec asked for.
  # Note: container.seccomp.security.alpha.kubernetes.io/<name> is the per-container key,
  # so ".../pod" would target a container named "pod"; seccomp annotations are deprecated
  # in favor of securityContext.seccompProfile.
  annotations:
    container.seccomp.security.alpha.kubernetes.io/pod: 'runtime/default'
    container.apparmor.security.beta.kubernetes.io/nginx: 'runtime/default'
spec:
  serviceAccountName: nginx
  containers:
  - image: nginx
    name: nginx
    resources:
      requests:
        memory: "128Mi"
        cpu: "250m"
      limits:
        memory: "256Mi"
        cpu: "500m"
    securityContext:
      runAsNonRoot: true
      runAsUser: 10001
      readOnlyRootFilesystem: true
      capabilities:
        drop:
        - ALL
      # When applying the pod we saw that, starting with 1.30, the AppArmor annotation is
      # deprecated in favor of this field; kubesec will probably need to be updated to check it.
      appArmorProfile:
        type: RuntimeDefault
    volumeMounts:
    - name: nginx-cache
      mountPath: /var/cache/nginx
    - name: nginx-config
      mountPath: /etc/nginx/conf.d/default.conf
      subPath: default.conf
    - name: nginx-run
      mountPath: /var/run/
  volumes:
  - name: nginx-cache
    emptyDir: {}
  - name: nginx-config
    configMap:
      name: nginx-config-map
  - name: nginx-run
    emptyDir: {}
  dnsPolicy: ClusterFirst
  restartPolicy: Always
status: {}

# Solved, with the maximum score
root@cks-master:~# docker run -i kubesec/kubesec:512c5e0 scan /dev/stdin < nginx.yaml
[
  {
    "object": "Pod/nginx.default",
    "valid": true,
    "message": "Passed with a score of 16 points",
    "score": 16,
    "scoring": {}
  }
]
root@cks-master:~# k apply -f nginx.yaml
pod/nginx created
root@cks-master:~# k get pods
NAME    READY   STATUS    RESTARTS   AGE
nginx   1/1     Running   0          5s
root@cks-master:~# k logs pods/nginx --tail 5
2024/09/02 19:54:03 [notice] 1#1: OS: Linux 5.15.0-1067-gcp
2024/09/02 19:54:03 [notice] 1#1: getrlimit(RLIMIT_NOFILE): 1048576:1048576
2024/09/02 19:54:03 [notice] 1#1: start worker processes
2024/09/02 19:54:03 [notice] 1#1: start worker process 21
2024/09/02 19:54:03 [notice] 1#1: start worker process 22
```

We can also install the binary and do the same.

```
root@cks-master:~# wget https://github.com/controlplaneio/kubesec/releases/download/v2.14.1/kubesec_linux_amd64.tar.gz
root@cks-master:~# tar -xzvf kubesec_linux_amd64.tar.gz
CHANGELOG.md
LICENSE
README.md
kubesec
root@cks-master:~# chmod +x kubesec
root@cks-master:~# mv kubesec /usr/local/bin/
root@cks-master:~# kubesec version
version 2.14.1
git commit 2d31e51659470451e6c51cb47be1df9e103fb49d
build date 2024-08-19T11:48:06Z

# Trying to compare with the version in the Docker image didn't work; that image was probably built directly from the project source.
# On kubesec's Docker Hub page we saw that this image is 5 years old, and it is the one used in the tool's official documentation. https://hub.docker.com/r/kubesec/kubesec/tags?page=&page_size=&ordering=&name=512c5e0
root@cks-master:~# docker run -i kubesec/kubesec:512c5e0 version
version unknown
git commit unknown
build date unknown

# Switching to the newest image version would give the same output as the binary command below.
# root@cks-master:~# docker run -i kubesec/kubesec:v2.14.1 scan /dev/stdin < nginx.yaml
root@cks-master:~# kubesec scan nginx.yaml
[
  {
    "object": "Pod/nginx.default",
    "valid": true,
    "fileName": "nginx.yaml",
    "message": "Passed with a score of 16 points",
    "score": 16,
    "scoring": {
      "passed": [
        {
          "id": "ApparmorAny",
          "selector": ".metadata .annotations .\"container.apparmor.security.beta.kubernetes.io/nginx\"",
          "reason": "Well defined AppArmor policies may provide greater protection from unknown threats. WARNING: NOT PRODUCTION READY",
          "points": 3
        },
        {
          "id": "ServiceAccountName",
          "selector": ".spec .serviceAccountName",
          "reason": "Service accounts restrict Kubernetes API access and should be configured with least privilege",
          "points": 3
        },
        {
          "id": "SeccompAny",
          "selector": ".metadata .annotations .\"container.seccomp.security.alpha.kubernetes.io/pod\"",
          "reason": "Seccomp profiles set minimum privilege and secure against unknown threats",
          "points": 1
        },
        {
          "id": "RunAsNonRoot",
          "selector": ".spec, .spec.containers[] | .securityContext .runAsNonRoot == true",
          "reason": "Force the running image to run as a non-root user to ensure least privilege",
          "points": 1
        },
        {
          "id": "RunAsUser",
          "selector": ".spec, .spec.containers[] | .securityContext .runAsUser -gt 10000",
          "reason": "Run as a high-UID user to avoid conflicts with the host's users",
          "points": 1
        },
        {
          "id": "LimitsCPU",
          "selector": "containers[] .resources .limits .cpu",
          "reason": "Enforcing CPU limits prevents DOS via resource exhaustion",
          "points": 1
        },
        {
          "id": "LimitsMemory",
          "selector": "containers[] .resources .limits .memory",
          "reason": "Enforcing memory limits prevents DOS via resource exhaustion",
          "points": 1
        },
        {
          "id": "RequestsCPU",
          "selector": "containers[] .resources .requests .cpu",
          "reason": "Enforcing CPU requests aids a fair balancing of resources across the cluster",
          "points": 1
        },
        {
          "id": "RequestsMemory",
          "selector": "containers[] .resources .requests .memory",
          "reason": "Enforcing memory requests aids a fair balancing of resources across the cluster",
          "points": 1
        },
        {
          "id": "CapDropAny",
          "selector": "containers[] .securityContext .capabilities .drop",
          "reason": "Reducing kernel capabilities available to a container limits its attack surface",
          "points": 1
        },
        {
          "id": "CapDropAll",
          "selector": "containers[] .securityContext .capabilities .drop | index(\"ALL\")",
          "reason": "Drop all capabilities and add only those required to reduce syscall attack surface",
          "points": 1
        },
        {
          "id": "ReadOnlyRootFilesystem",
          "selector": "containers[] .securityContext .readOnlyRootFilesystem == true",
          "reason": "An immutable root filesystem can prevent malicious binaries being added to PATH and increase attack cost",
          "points": 1
        }
      ],
      "advise": [
        {
          "id": "AutomountServiceAccountToken",
          "selector": ".spec .automountServiceAccountToken == false",
          "reason": "Disabling the automounting of Service Account Token reduces the attack surface of the API server",
          "points": 1
        },
        {
          "id": "RunAsGroup",
          "selector": ".spec, .spec.containers[] | .securityContext .runAsGroup -gt 10000",
          "reason": "Run as a high-UID group to avoid conflicts with the host's groups",
          "points": 1
        }
      ]
    }
  }
]
```

We found more improvements. Note that the output now also lists the checks that passed, and there are two new pieces of advice.
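In the binary scans, the score equals the sum of the `points` in the `passed` list: 3+3 plus ten checks worth 1 point each gives 16. Applying the two new pieces of advice brings it to 18. The minimal sketch below uses a hypothetical `kubesec_summary` helper to summarize a report that way and list the advice IDs still pending. It is line-based, so it assumes kubesec's pretty-printed JSON for one object, as shown above.

```shell
# Hypothetical helper: summarize a kubesec report read from stdin.
# Sums "points" only inside the "passed" array and collects advice IDs.
kubesec_summary() {
  awk '
    /"passed":/   { section = "passed" }
    /"advise":/   { section = "advise" }
    /"critical":/ { section = "critical" }   # never counted as passed
    /^[ \t]*"score":/ { gsub(/[^0-9-]/, ""); score = $0 }
    section == "passed" && /"points":/ {
      line = $0; gsub(/[^0-9-]/, "", line); sum += line
    }
    section == "advise" && /"id":/ {
      line = $0; sub(/.*"id":[ \t]*"/, "", line); sub(/".*/, "", line)
      pending = pending " " line
    }
    END {
      printf "score=%s passed_points=%d\n", score, sum
      if (pending != "") printf "pending:%s\n", pending
    }
  '
}

# Usage: kubesec scan nginx.yaml | kubesec_summary
# For the 16-point report above this prints:
#   score=16 passed_points=16
#   pending: AutomountServiceAccountToken RunAsGroup
```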

```
root@cks-master:~# vim nginx.yaml
root@cks-master:~# cat nginx.yaml
apiVersion: v1
kind: Pod
metadata:
  creationTimestamp: null
  labels:
    run: nginx
  name: nginx
  annotations:
    container.seccomp.security.alpha.kubernetes.io/pod: 'runtime/default'
    container.apparmor.security.beta.kubernetes.io/nginx: 'runtime/default'
spec:
  serviceAccountName: nginx
  automountServiceAccountToken: false # Added
  containers:
  - image: nginx
    name: nginx
    resources:
      requests:
        memory: "128Mi"
        cpu: "250m"
      limits:
        memory: "256Mi"
        cpu: "500m"
    securityContext:
      runAsNonRoot: true
      runAsUser: 10001
      runAsGroup: 10001 # Added
      readOnlyRootFilesystem: true
      capabilities:
        drop:
        - ALL
      appArmorProfile:
        type: RuntimeDefault
    volumeMounts:
    - name: nginx-cache
      mountPath: /var/cache/nginx
    - name: nginx-config
      mountPath: /etc/nginx/conf.d/default.conf
      subPath: default.conf
    - name: nginx-run
      mountPath: /var/run/
  volumes:
  - name: nginx-cache
    emptyDir: {}
  - name: nginx-config
    configMap:
      name: nginx-config-map
  - name: nginx-run
    emptyDir: {}
  dnsPolicy: ClusterFirst
  restartPolicy: Always
status: {}
root@cks-master:~# kubesec scan nginx.yaml
[
  {
    "object": "Pod/nginx.default",
    "valid": true,
    "fileName": "nginx.yaml",
    "message": "Passed with a score of 18 points",
    "score": 18,
    "scoring": {
      "passed": [
        {
          "id": "ApparmorAny",
          "selector": ".metadata .annotations .\"container.apparmor.security.beta.kubernetes.io/nginx\"",
          "reason": "Well defined AppArmor policies may provide greater protection from unknown threats. WARNING: NOT PRODUCTION READY",
          "points": 3
        },
        {
          "id": "ServiceAccountName",
          "selector": ".spec .serviceAccountName",
          "reason": "Service accounts restrict Kubernetes API access and should be configured with least privilege",
          "points": 3
        },
        {
          "id": "SeccompAny",
          "selector": ".metadata .annotations .\"container.seccomp.security.alpha.kubernetes.io/pod\"",
          "reason": "Seccomp profiles set minimum privilege and secure against unknown threats",
          "points": 1
        },
        {
          "id": "AutomountServiceAccountToken",
          "selector": ".spec .automountServiceAccountToken == false",
          "reason": "Disabling the automounting of Service Account Token reduces the attack surface of the API server",
          "points": 1
        },
        {
          "id": "RunAsGroup",
          "selector": ".spec, .spec.containers[] | .securityContext .runAsGroup -gt 10000",
          "reason": "Run as a high-UID group to avoid conflicts with the host's groups",
          "points": 1
        },
        {
          "id": "RunAsNonRoot",
          "selector": ".spec, .spec.containers[] | .securityContext .runAsNonRoot == true",
          "reason": "Force the running image to run as a non-root user to ensure least privilege",
          "points": 1
        },
        {
          "id": "RunAsUser",
          "selector": ".spec, .spec.containers[] | .securityContext .runAsUser -gt 10000",
          "reason": "Run as a high-UID user to avoid conflicts with the host's users",
          "points": 1
        },
        {
          "id": "LimitsCPU",
          "selector": "containers[] .resources .limits .cpu",
          "reason": "Enforcing CPU limits prevents DOS via resource exhaustion",
          "points": 1
        },
        {
          "id": "LimitsMemory",
          "selector": "containers[] .resources .limits .memory",
          "reason": "Enforcing memory limits prevents DOS via resource exhaustion",
          "points": 1
        },
        {
          "id": "RequestsCPU",
          "selector": "containers[] .resources .requests .cpu",
          "reason": "Enforcing CPU requests aids a fair balancing of resources across the cluster",
          "points": 1
        },
        {
          "id": "RequestsMemory",
          "selector": "containers[] .resources .requests .memory",
          "reason": "Enforcing memory requests aids a fair balancing of resources across the cluster",
          "points": 1
        },
        {
          "id": "CapDropAny",
          "selector": "containers[] .securityContext .capabilities .drop",
          "reason": "Reducing kernel capabilities available to a container limits its attack surface",
          "points": 1
        },
        {
          "id": "CapDropAll",
          "selector": "containers[] .securityContext .capabilities .drop | index(\"ALL\")",
          "reason": "Drop all capabilities and add only those required to reduce syscall attack surface",
          "points": 1
        },
        {
          "id": "ReadOnlyRootFilesystem",
          "selector": "containers[] .securityContext .readOnlyRootFilesystem == true",
          "reason": "An immutable root filesystem can prevent malicious binaries being added to PATH and increase attack cost",
          "points": 1
        }
      ]
    }
  }
]
root@cks-master:~# k apply -f nginx.yaml
pod/nginx created
root@cks-master:~# k get pods
NAME    READY   STATUS    RESTARTS   AGE
nginx   1/1     Running   0          3s
```